- Dynatrace Community

Maintenance Windows for Windows OS Services Expected Behavior

24 Mar 2023 02:06 PM

Hey everyone, how are some of you putting Windows OS service availability alerts into a maintenance window? Through some testing I have found that the problem card for a stopped Windows service is associated with a custom device, and each OS service seems to get its own custom device. Creating a maint window for the host the service runs on does not suppress alerting, because the maintenance window is tied only to the host while the problem is tied to the custom device. At least that is what I am coming up with in my testing.

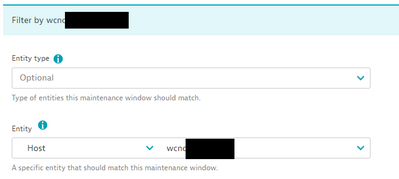

My first test:

- Created a maint window for a single host, using the host entity filter with the hostname, and configured the maint window for 'Detect problems but don't alert'

- Confirmed host was in the newly created maint window

- Stopped a windows service

- Saw the event show on the host page AND custom device for the service stopping. Event did not have the maint window ID tied to it

- Noticed a problem card created for the stopped service. Problem card did not show as under maintenance

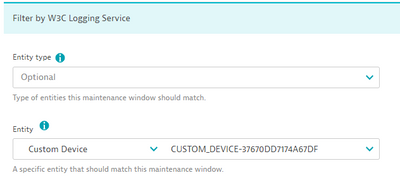

My second test:

- Created a maint window for the custom device that the Windows OS service is connected to, also configured for 'Detect problems but don't alert'

- Confirmed host is not in the newly created maint window, expected because the maint window only covers the custom device

- Stopped the Windows service

- Saw the event show on the host page AND custom device for the service stopping. Event did have the maint window ID tied to it (different from the first test)

- Noticed a problem card created for the stopped service. What is different here is that the problem showed as under maintenance. Once the maint window expired, the alerting triggered

Overall, it seems the problem card for a stopped Windows service sits on the custom device; however, Dynatrace is not putting the pieces together and suppressing the alerting when you configure maint windows for the host the service runs on. This is very problematic for obvious reasons. We can't possibly be expected to know, for the thousands of maint windows we configure every month, all the Windows services that have been set up for monitoring, and then add their custom devices to each maint window config.
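The difference between the two tests can be modelled with a toy sketch. This is not how Dynatrace is implemented; it only assumes that suppression is decided by whether the entity the problem is attached to is itself covered by a window, which is what the two tests suggest. The class names and entity IDs are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MaintenanceWindow:
    name: str
    entity_ids: frozenset  # entities matched by the window's filter


@dataclass(frozen=True)
class Problem:
    title: str
    affected_entity: str  # entity the problem card is attached to


def is_suppressed(problem, windows):
    """Assumed rule: suppressed only if the problem's own entity is in a window."""
    return any(problem.affected_entity in w.entity_ids for w in windows)


# Hypothetical IDs, for illustration only.
HOST = "HOST-1"
OS_SERVICE_DEVICE = "CUSTOM_DEVICE-1"  # runs on HOST but is a separate entity

problem = Problem("OS service stopped", OS_SERVICE_DEVICE)
host_window = MaintenanceWindow("first test", frozenset({HOST}))
device_window = MaintenanceWindow("second test", frozenset({OS_SERVICE_DEVICE}))

print(is_suppressed(problem, [host_window]))    # False: the alert goes out
print(is_suppressed(problem, [device_window]))  # True: held back while the window is active
```

Under this model, a host-scoped window never matches the problem because nothing links the custom device back to its host.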

Is someone able to confirm what I am seeing here? This is a major issue for us and I imagine for anyone monitoring windows os services, just want to be sure I have my facts straight here and not missing something.

NOTE, I am looking for someone to confirm how dynatrace acts in these situations, I'm not looking for someone to say something like 'it should do that or should do this'. I only care about what it is actually doing today.

maint window used during first test

maint window used during second test

- Labels: maintenance window, problems classic

24 Mar 2023 07:20 PM

I want to also add that this issue seems to occur when the OS service rule is set up at the host group level OR the host level. If it is set up at the env level, this does not seem to be an issue.

04 Apr 2023 01:45 PM

@sivart_89 interesting observation about where the OS Service is configured (host, hostgroup, global). I wonder why that is, doesn't make any logical sense to have them act differently.

Thanks for responding to my other post and directing me here.

04 Apr 2023 02:08 PM

Thanks for posting on that, reminded me I need to look at it again. A colleague of mine noticed recently that even the rules set up at the env level are impacted. I went back and looked at our Netcool logs and see that for some OS service events we did receive the tags at the host level (expected) but for others we did not. We instead received the tag at the custom device level for the OS service (we do have an auto tag rule that tags the name of the custom device).

So slight correction to my previous statement. Nonetheless there is an issue that should be fixed.

01 Nov 2023 12:48 PM - edited 01 Nov 2023 12:53 PM

Related requirement here: put into maintenance everything that's running on a given host, including OS services.

Our solution for hosts, PGs, PGIs and services: add a tag <onHost:detected host name> to them, then create a MW scoped on this tag.

Problem: I cannot find a way to also tag "OS Services" aka "CUSTOM_DEVICE" with <onHost:detected host name>.

Ideas?
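For reference, a tag-scoped MW like the one described above could be built as a Settings API object roughly as follows. This is a sketch only: the field names reflect my understanding of the `builtin:alerting.maintenance-window` schema and the "key:value" tag string format is an assumption, so check both against the schema in your own environment. The function name, host name and times are made up, and nothing is sent anywhere.

```python
import json


def tag_scoped_window(name, host_name, start, end, tag_key="onHost"):
    """Build a one-off maintenance window payload scoped on <tag_key:host_name>.

    Field names are assumed from the builtin:alerting.maintenance-window
    schema; verify them before posting to /api/v2/settings/objects.
    """
    tag = f"{tag_key}:{host_name}"  # assumed "key:value" tag notation
    return {
        "schemaId": "builtin:alerting.maintenance-window",
        "scope": "environment",
        "value": {
            "enabled": True,
            "generalProperties": {
                "name": name,
                "maintenanceType": "PLANNED",
                # 'Detect problems but don't alert' in the UI
                "suppression": "DETECT_PROBLEMS_DONT_ALERT",
                "disableSyntheticMonitorExecution": False,
            },
            "schedule": {
                "scheduleType": "ONCE",
                "onceRecurrence": {
                    "startTime": start,
                    "endTime": end,
                    "timeZone": "UTC",
                },
            },
            # No entity type given: the filter is meant to match any entity
            # carrying the tag, including OS service custom devices.
            "filters": [{"entityTags": [tag], "managementZones": []}],
        },
    }


window = tag_scoped_window(
    "patching web01", "web01", "2023-11-01T22:00:00", "2023-11-01T23:00:00"
)
print(json.dumps([window], indent=2))
```

The window only helps for OS services once their custom devices actually carry the tag, which is the open question in this post.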

01 Nov 2023 01:23 PM

Your approach of applying the hostname tag to hosts, pgis, services, etc is exactly how we were doing it as well.

The problem with this auto tag example provided in the link is that you have to explicitly spell out each server name which means we would have in our env 6k+ tags, each with a unique hostname. You probably can't even have that many tags nor should anyone be expected to do so. In addition to that you would need to build into your new server build process to have this tag applied automatically when a new server is built. All of this is not practical.

I could be missing something, but we also chatted with a Dynatrace specialist and never found a way to have 1 auto tag rule dynamically populate the hostname value with the host the OS service runs on. Because of this, a colleague of mine built a Python script that runs daily to add the tag. Our maint windows now suppress OS service alerts because the custom device has this hostname tag.

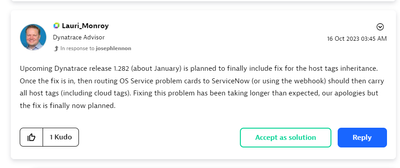
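I can't share the actual script, but the tagging step could look something like the sketch below. It assumes you already have a mapping from each OS service custom device to its host name (how you resolve that is not shown and is up to you), and it uses the custom tags endpoint of the Environment API v2 (`POST /api/v2/tags` with an `entitySelector`). The URL, IDs, tag key and function names are placeholders.

```python
import json
import urllib.parse
import urllib.request

TAG_KEY = "onHost"  # tag key borrowed from the post above; any key works


def build_tag_request(base_url, device_id, host_name):
    """Return (url, body) for tagging one custom device with its host name."""
    selector = f'entityId("{device_id}")'
    query = urllib.parse.urlencode({"entitySelector": selector})
    url = f"{base_url.rstrip('/')}/api/v2/tags?{query}"
    body = {"tags": [{"key": TAG_KEY, "value": host_name}]}
    return url, body


def post_tag(url, body, api_token):
    """Send one tagging request. Not called below; needs a real environment and token."""
    req = urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Api-Token {api_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return resp.status


# Hypothetical mapping: OS service custom device ID -> host it runs on.
device_to_host = {
    "CUSTOM_DEVICE-1": "web01",
    "CUSTOM_DEVICE-2": "web01",
    "CUSTOM_DEVICE-3": "db01",
}

# Placeholder environment URL; replace with your own.
BASE_URL = "https://example.invalid"
pending = [build_tag_request(BASE_URL, d, h) for d, h in device_to_host.items()]
for url, body in pending:
    print(url, json.dumps(body))
```

Running this daily (cron, scheduled task, whatever you have) picks up newly monitored OS services without anyone maintaining one tag rule per server.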

OS services have been nothing but a mess for the last several months (my opinion), ever since things were changed so that each OS service is its own custom device (I believe with cluster version 1.261) and, at some point, stopped being suppressed via a maintenance window when you use only the host filter in the maint window (not using a tag). If you aren't aware of this post below, it may shed some light on some upcoming changes. I think the change coming in Jan with cluster version 1.282 will fix this issue.

01 Nov 2023 05:45 PM

There will be future enhancements to the linkage of OS services to the associated host, but for now it's all classified as a custom device.

01 May 2024 02:45 PM

Late to the party: I too have "requested" that this be changed to include ALL child entities directly and distinctly associated with the HOST being scheduled. This includes disks, services, PG instances, etc. But it should NOT include PGs that may or may not belong to multiple hosts.
