- Problem analysis for HTTP monitor


09 Mar 2020 10:36 AM, last edited on 29 Aug 2022 12:43 PM by MaciejNeumann

Hello Guys,

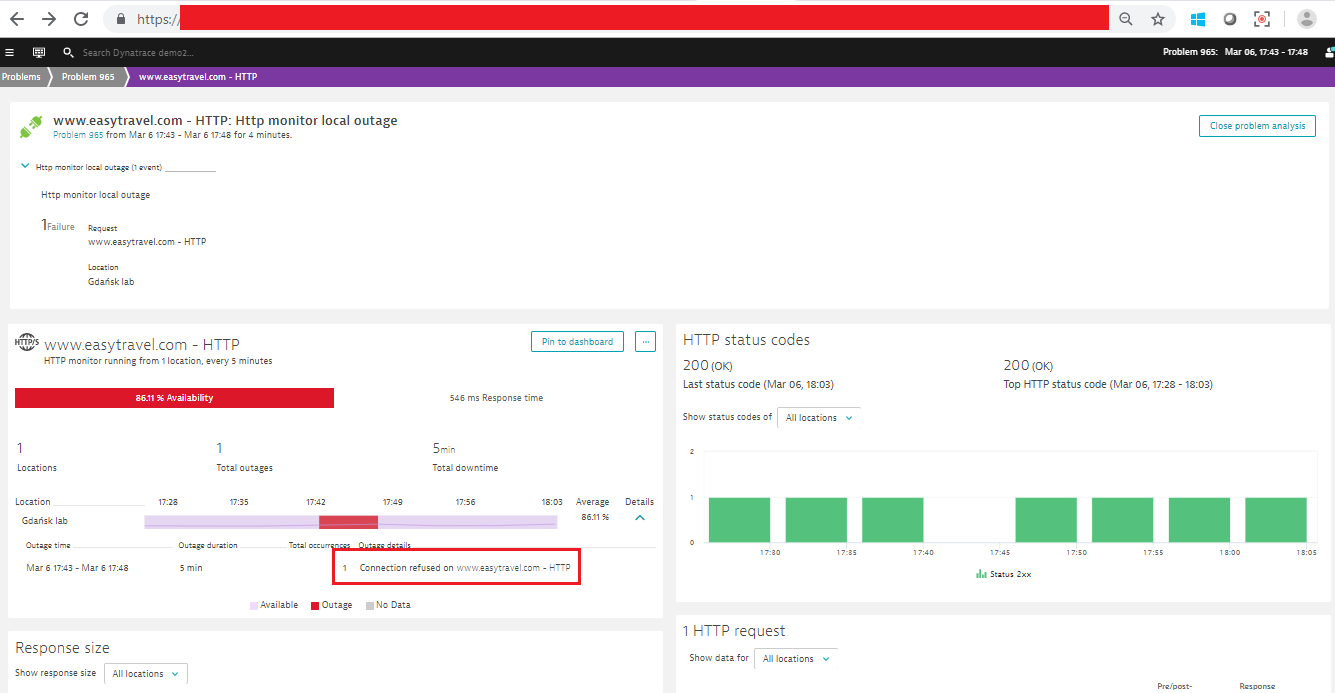

How can I troubleshoot the problem in the screenshot further? For example, which services are impacted, and what is the exact root cause of the problem? Please guide.

Regards,

AK


09 Mar 2020 10:47 AM

It basically means that the web server refused the connection made by the synthetic robot. The web server may have been unavailable for some reason.

Sebastian


09 Mar 2020 11:01 AM

Hello Sebastian,

Thanks for the comment.

But when a web server is unavailable, can we check from this page itself, for the HTTP monitor, which web server is unavailable and why?

Thanks


09 Mar 2020 01:04 PM

Browser monitors provide plenty of detail, but for simple HTTP monitors the information you see on that page is all there is. I don’t see any option there to drill down to PurePaths either. However, you should be able to find the requests coming from the synthetic location by client IP in the service that was responsible for the web server's unavailability. The HTTP monitor is executed on an ActiveGate inside your architecture, so its IP address is known, and it can also be tracked as a request attribute for searching and filtering.

Sebastian


10 Mar 2020 03:25 PM

Do you mean the user session for the ActiveGate IP, which acts as the client running the HTTP monitor?


10 Mar 2020 05:41 PM

There will be no user session for an HTTP monitor, but you can find the server-side PurePaths related to it. If you know the ActiveGate IP, you can use it to find the relevant requests and see what happened.

Sebastian


09 Mar 2020 03:48 PM

Hi Akshay,

Another potential solution I have seen when working with HTTP monitors is to hit the specific web servers individually. That way, when an HTTP monitor fails, you can immediately identify which server was impacted.

Thanks,

Michael Oxendine


10 Mar 2020 05:05 PM

Hi Michael,

How did you achieve this? Can you share some sort of example?

Regards,

AK


10 Mar 2020 05:12 PM

Hi Akshay,

If you are able to access the web servers directly rather than going through the domain name, you can achieve this.

Example:

https://servername:port/web/path
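To illustrate the idea outside of Dynatrace, here is a minimal Python sketch of probing each web server directly instead of the shared domain name. It is not how Dynatrace HTTP monitors are implemented; the function names, the example server names, and the "2xx/3xx means healthy" rule are assumptions made for illustration.

```python
import urllib.error
import urllib.request


def build_direct_urls(servers, port, path, scheme="https"):
    """Build one URL per backend server, bypassing the shared domain name."""
    return {name: f"{scheme}://{name}:{port}{path}" for name in servers}


def http_status(url, timeout=5.0):
    """Return the HTTP status code for url, or None if no response arrived."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # the server answered, but with an error status
    except (urllib.error.URLError, OSError):
        return None  # refused connection, DNS failure, timeout, ...


def check_servers(urls, probe=http_status):
    """Map each server name to True (healthy) or False (failed).

    Assumption: any 2xx or 3xx status counts as healthy.
    """
    results = {}
    for name, url in urls.items():
        status = probe(url)
        results[name] = status is not None and 200 <= status < 400
    return results
```

Calling `check_servers(build_direct_urls(["server1", "server2"], 443, "/webapp"))` would return one pass/fail entry per server, so a refused connection points at a specific host rather than at the domain as a whole. The `probe` parameter exists only so the logic can be exercised without network access.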


10 Mar 2020 05:28 PM

Thanks Michael,

But that host must have OneAgent installed. In our case, the host does not have OneAgent, so there is no deep visibility into what went wrong, and I believe even "Assign HTTP monitor to web application" won't work. Correct me if I'm wrong.


10 Mar 2020 05:43 PM

Without OneAgent, you can only pass a custom header with the request and find a way to log requests carrying that header to a separate log file. You can then push those logs somewhere and alert on them, but this is a really ugly workaround.

If you run a monitor directly against each web server, skipping the load balancer, each HTTP monitor will report the responses of its own server. A failing HTTP monitor then means that this particular web server is down.
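The header-based workaround could look roughly like the following Python sketch. Everything specific here is an assumption for illustration: the header name `X-Synthetic-Check` is hypothetical, and the log filter assumes the web server has been configured to write the header value into each log line as a `synthetic=<value>` field.

```python
import urllib.request

# Hypothetical header name; use whatever your web server is set up to log.
MARKER_HEADER = "X-Synthetic-Check"


def tagged_request(url, monitor_name):
    """Build a GET request that carries the marker header."""
    return urllib.request.Request(url, headers={MARKER_HEADER: monitor_name})


def monitor_lines(log_lines, monitor_name):
    """Return the log lines written for requests carrying the marker.

    Assumes each log line contains a whitespace-separated field of the
    form synthetic=<header value>.
    """
    token = f"synthetic={monitor_name}"
    return [line for line in log_lines if token in line.split()]
```

The filtered lines could then be shipped to whatever log or alerting system is already in place. As noted above, this is a workaround and gives far less context than instrumenting the host.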


10 Mar 2020 06:48 PM

Hi Akshay,

If we hit the servers individually, the only additional information the HTTP monitor can provide is which server is having issues. So if you have server1 and server2, and server1:443/webapp fails all its checks while server2:443/webapp passes all its checks, then you know which server has the issue (or whether both do). Beyond that, getting in-depth data to correlate with a synthetic monitor (i.e. services, root cause) requires OneAgent. Getting that sort of information would also be aided by making the synthetic a browser monitor, so you could correlate PurePath data from the front-end user (the synthetic) with back-end performance.

Thanks,

Michael Oxendine
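The server1/server2 reasoning above can be written down as a small Python sketch. The function name and the shape of the input (a list of pass/fail results per monitored endpoint) are assumptions for illustration, not something Dynatrace exposes in this form.

```python
def summarize(check_history):
    """Classify which servers are failing.

    check_history maps a server endpoint to a list of booleans, one per
    check (True = passed). A server counts as failing only if it has at
    least one check and every check failed.
    """
    failing = sorted(
        name
        for name, checks in check_history.items()
        if checks and not any(checks)
    )
    if not failing:
        scope = "none"
    elif len(failing) == len(check_history):
        scope = "all"
    else:
        scope = "some"
    return scope, failing
```

For example, a history in which every check for `server1:443/webapp` failed and every check for `server2:443/webapp` passed yields `("some", ["server1:443/webapp"])`, which narrows the problem to one server but says nothing about the root cause.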


09 Mar 2020 10:48 AM

I suggest hiding URLs in screenshots, because they show the whole path including the environment ID. That is something you should keep confidential.

Sebastian


09 Mar 2020 10:52 AM

Thank you for noticing this @Sebastian K.! I've censored the URL on the screenshot for @Akshay S.


09 Mar 2020 11:14 AM

Thanks, Sebastian, for pointing this out, and Maciej for hiding it. I'm using the demo URL and thought it would be the same for all users, so I didn't hide it when posting the question.


09 Mar 2020 12:53 PM

It’s the same indeed, but it’s always better to hide such information in general 🙂


09 Mar 2020 01:13 PM

@Akshay S. I would recommend using a browser monitor instead of an HTTP monitor, as it will give you a more in-depth analysis of your synthetic test, much like a PurePath does. I have answered your other question and explained the differences between the 3 monitoring options. I will also include a screenshot of them with a waterfall analysis so you can see the benefit of browser monitors vs HTTP monitors.
