Node-name based Kubernetes node ID calculation

With release 1.289 we introduced a new way to calculate the ID for Kubernetes node monitored entities for new tenants. This article explains the reasons behind the new calculation, minimum version requirements, visible effects, as well as some background information.

Update: We decided at the last minute to apply the new node-name based ID calculation only for new tenants. If you experience the problem described below with the old ID calculation, please contact Support.

Why is there the new node-name based ID calculation?

Up to release 1.288, we used each node's systemUUID to generate the ID for Kubernetes node entities in Dynatrace. We discovered that there are situations (e.g., OpenShift clusters running on the PPC64LE architecture) where the systemUUID is not unique. As a result, not all nodes were visible in the user interface. Moreover, if the same systemUUID existed on different clusters, it was not predictable which cluster such a node would be assigned to. The new calculation also makes us future-proof when it comes to integrating other data sources such as Open Ingest.

Therefore, we decided to implement an ID calculation based on the Kubernetes node name and the cluster ID. Because the name identifies a node within a Kubernetes cluster, two nodes in the same cluster cannot have the same name at the same time [See Nodes | Kubernetes]. The combination of node name and cluster ID therefore guarantees that the calculated IDs are unique. This calculation method is in effect for new tenants only and can be enabled for existing tenants upon request.
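The uniqueness argument can be illustrated with a small shell sketch. This is not Dynatrace's actual ID algorithm, which is not described in this article. The function names `node_keys` and `count_distinct`, the tab-separated input layout, and the `cluster/node` key format are assumptions made purely for illustration.

```shell
# Illustration only: NOT the real Dynatrace ID calculation.
# node_keys reads "<cluster id><TAB><node name><TAB><systemUUID>" lines
# on stdin and prints one key per node, built from cluster id and node name.
node_keys() {
  awk -F '\t' '{ print $1 "/" $2 }'
}

# count_distinct prints the number of distinct lines read from stdin.
count_distinct() {
  sort -u | wc -l | tr -d ' '
}
```

Fed two nodes named `worker-0` that live in different clusters but report the same systemUUID, `cut -f3 | count_distinct` yields one distinct value, whereas `node_keys | count_distinct` yields two.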

Is a Kubernetes cluster affected?

If fewer Kubernetes node entities are visible in Dynatrace than actually exist in the Kubernetes cluster, non-unique systemUUIDs are likely the problem.

List the systemUUIDs of the Kubernetes cluster:

kubectl get nodes -o=json | jq | grep systemUUID | sort

Get the number of nodes:

kubectl get nodes -o=json | jq | grep systemUUID | sort | wc -l

Get the number of unique systemUUIDs:

kubectl get nodes -o=json | jq | grep systemUUID | sort | uniq | wc -l

If the number of nodes and the number of unique systemUUIDs do not match, the cluster is affected by the incorrect node ID calculation. In such a case, please contact Support for assistance.

We have also noticed that some variants of Kubernetes installations (in this specific case, Alibaba Cloud) do not provide a systemUUID. Although Alibaba Cloud is not officially supported by Dynatrace, it is worth trying to switch to the alternative node ID calculation. Please contact Support for assistance in that case, too.
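A quick way to check whether a cluster reports nodes without any systemUUID could look like the sketch below. The function name `find_nodes_without_uuid` is hypothetical, and the input is assumed to be one `<node name><TAB><systemUUID>` line per node.

```shell
# find_nodes_without_uuid reads "<node name><TAB><systemUUID>" lines on
# stdin and prints the names of nodes whose systemUUID is empty or missing.
find_nodes_without_uuid() {
  awk -F '\t' '$1 != "" && $2 == "" { print $1 }'
}

# Usage (assumes kubectl points at the cluster to inspect):
#   kubectl get nodes \
#     -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.nodeInfo.systemUUID}{"\n"}{end}' \
#     | find_nodes_without_uuid
```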

What are the impacts of the new ID calculation?

1. New KUBERNETES_NODE entities

During the transition to the new calculation method, you will see twice as many Kubernetes nodes. This is expected behavior. Because node names remain the same, you will see a pair of Kubernetes node entities for each node name: one ceases to be monitored once the transition takes place, while the second becomes visible and is used as the reference for metrics, events, log lines, etc.

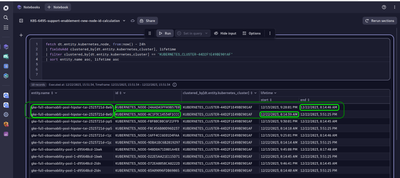

With the following DQL query you can see those duplicate nodes in Grail™:

fetch dt.entity.kubernetes_node, from:now() - 24h

| fieldsAdd clustered_by[dt.entity.kubernetes_cluster], lifetime

| filter clustered_by[dt.entity.kubernetes_cluster] == "<a KUBERNETES_CLUSTER id>"

| sort entity.name asc, lifetime asc

2. Monitoring gap

Changing the node ID calculation method requires a renegotiation of which ActiveGate is responsible for monitoring the Kubernetes cluster. This results in a monitoring gap that can last 1 to 3 minutes, which is expected behavior.

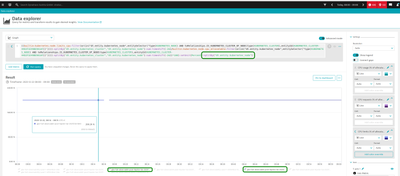

In the example above, you can see the gap and two series with the same node name. The slightly different color in the chart indicates that two different Kubernetes node entities (with the same name) are stored for the metric.

3. Metric events etc. based on the Kubernetes node entity ID

If you have any metric events based on the Kubernetes node entity ID, those events won't work anymore. To avoid gaps in those metric events, we recommend changing them to use the node name in combination with the cluster ID instead of the entity ID. (Learn more about metric events here.)

Minimum version requirements

- ActiveGate >= 1.273

- OneAgent >= 1.272
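As a rough aid for checking these minimums in a script, the following sketch compares dotted version strings component by component. The function name `version_at_least` is hypothetical, and how you obtain the ActiveGate and OneAgent versions of your deployment is left open here.

```shell
# version_at_least ACTUAL MINIMUM
# Succeeds (exit status 0) if ACTUAL is greater than or equal to MINIMUM.
# Versions are compared numerically, component by component; missing
# components count as 0, so "1.273" equals "1.273.0".
version_at_least() {
  awk -v a="$1" -v b="$2" 'BEGIN {
    na = split(a, x, ".")
    nb = split(b, y, ".")
    n = (na > nb) ? na : nb
    for (i = 1; i <= n; i++) {
      xi = x[i] + 0
      yi = y[i] + 0
      if (xi > yi) exit 0
      if (xi < yi) exit 1
    }
    exit 0
  }'
}

# Example: check a (made-up) ActiveGate version against the minimum.
# version_at_least "1.289.4" "1.273" && echo "ActiveGate version is sufficient"
```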

What happens if the requirements are not met?

- Some problems might point to a non-existent KUBERNETES_NODE entity, if the ActiveGate is too old.

- No relationships between the HOST entities and the KUBERNETES_NODE entities are stored if the OneAgent is too old or a Dynatrace Operator version that is no longer supported is used.

Opt-In

If you experience missing Kubernetes node entities as described above and the version requirements are met, please contact Support, who can assist you in opting in to the new node-name based node ID calculation. Note that this opt-in affects the entire tenant.

Troubleshooting

Problems related to the node-name based ID calculation could include, for example:

- broken links to node entities

- no node information available where the information is expected

In this case, please verify whether the minimum version requirements are met.