- Configuration-less Advanced SSL Certificate Check Plugin

12 Jan 2021 02:00 PM - last edited on 23 Mar 2023 10:37 AM by andre_vdveen

There are multiple ways to validate SSL certificates and alert on expiry. I've looked at all of them and found them lacking in automation. I therefore created another one that hopefully overcomes some of the limitations and is easier to use in large environments.

As this feature has not been available out of the box for a long time, this might be useful.

Summarizing the various attempts and threads from:

SSL Certification expiration checks out of the box - Details? (@Larry R.)

Does Dynatrace monitor SSL certificate validation (@Akshay S.)

Monitor SSL certificate expiry and generate alert (@Dario C.)

(also the contributors @Július L.'s OneAgent extension and @Leon Van Z.'s ActiveGate extension)

What is different about this plugin?

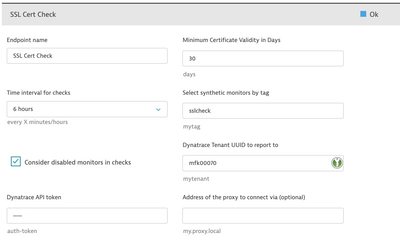

- It doesn't need any configuration for hosts/sites that are checked. The endpoints are determined dynamically from already configured (and tagged) synthetic monitors. Add/remove monitors and they will be checked automatically without any additional configuration needs.

- Error events (about expiring certificates) are posted/attached to the synthetic monitor, where one would expect them, not to custom devices.

- Check intervals can be set to long timeframes; no one needs to check certificate validity every minute or even every hour.

- It is an ActiveGate remote plugin, so it can communicate with the Dynatrace API via the ActiveGate.

- It doesn't consume any licenses for custom metrics!
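A minimal sketch of how check endpoints could be derived from monitors, assuming the monitor definitions have already been fetched and reduced to a hypothetical shape of `entityId` plus a list of `urls`. The plugin's actual data model and API calls are not shown in this post, so every name here is illustrative:

```python
from urllib.parse import urlsplit


def endpoints_from_monitors(monitors):
    """Map (hostname, port) -> set of monitor IDs that use that HTTPS endpoint.

    `monitors` is a hypothetical, already-fetched list of dicts such as
    {"entityId": "SYNTHETIC_TEST-1", "urls": ["https://example.com/"]}.
    """
    endpoints = {}
    for monitor in monitors:
        for url in monitor.get("urls", []):
            parts = urlsplit(url)
            # Only HTTPS URLs carry a certificate worth checking.
            if parts.scheme != "https" or not parts.hostname:
                continue
            key = (parts.hostname, parts.port or 443)
            endpoints.setdefault(key, set()).add(monitor["entityId"])
    return endpoints
```

Grouping by host and port means each certificate is fetched once even when several monitors point at the same site, while the set of monitor IDs still tells you where to attach an event.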

Where to find it?

You can find the plugin in my personal GitHub repository.

12 Jan 2021 02:23 PM

NICE! You sir, @Reinhard W. ROCK! 🙂

12 Jan 2021 04:37 PM

Thanks @Larry R., hope you can use it. Feedback always welcome!

12 Jan 2021 02:26 PM

Thanks for sharing! Our services team created a similar one, but instead of using synthetic tests as input it took a CSV file and also automatically discovered all HTTPS endpoints from incoming/outgoing service calls.

That one does require some configuration though and does use DDUs to track the endpoints.

Mike

12 Jan 2021 04:41 PM

Using the synthetic monitor configuration seemed logical. I can't rely too much on the services, as they are more likely to change; eventually there may be no services at all, and one would still perform synthetic tests (or test something that isn't even covered by DT on the backend).

Though I use the detected services approach to automatically configure RUM applications at scale...waiting for DT to bring back automatic application detection 🙂

14 Jan 2021 03:53 PM

Thanks for sharing!

I will try it.

The best solution (in my opinion) was to develop our own AG plugin (based on the ssl and openssl libraries) so that we can manage our own groups of certificates and the associated alert thresholds. (I don't really like to use synthetics for this kind of monitoring.)

My other concern with using events is that the problem is automatically closed after 15 minutes (maximum 120 minutes), so this would not be compatible with an execution schedule longer than 120 minutes (or we would have to manage this in the script, and many events would be created every day until the certificate is renewed).

14 Jan 2021 04:55 PM

Hi @Aymeric B.,

actually this AG plugin uses OpenSSL in the background to fetch the certificates. Any solution that gets certificates from remote servers is some kind of synthetic monitoring. Unless you do cert checks locally on the filesystem (which is hardly controllable on large heterogeneous environments) IMO.

There is no issue with problems closing after 15 minutes. You can actually set the timeoutduration higher and also simply refresh the problem when needed. So my approach is to set the timeout to longer than the check interval, then the problem will be simply refreshed and no additional ones will be created.
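A sketch of what such an event payload could look like. The field names follow the Dynatrace Events API v1 as far as I know it and should be verified against the official documentation; the function name, the `source` value, and the 5-minute margin are made up for illustration and are not taken from the plugin:

```python
MAX_TIMEOUT_MINUTES = 120  # maximum event timeout mentioned in this thread


def build_expiry_event(entity_id, hostname, days_left, check_interval_minutes):
    """Build an error-event payload attached to one synthetic monitor."""
    # Ask for a timeout slightly longer than the check interval, capped at the maximum.
    timeout = min(check_interval_minutes + 5, MAX_TIMEOUT_MINUTES)
    return {
        "eventType": "ERROR_EVENT",
        "timeoutMinutes": timeout,
        "attachRules": {"entityIds": [entity_id]},
        "source": "certcheck-sketch",  # hypothetical source name
        "title": "SSL certificate about to expire",
        "description": f"Certificate for {hostname} expires in {days_left} days",
        "customProperties": {"Days left": str(days_left)},
    }
```

When the check interval is longer than the cap, the event has to be re-sent before it times out, which is the refresh approach described above.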

14 Jan 2021 06:03 PM

Hi @Reinhard W.

We had specific needs for this AG plugin (management of assignment groups for the ticketing tool, different thresholds according to the type of certificates, ...).

Regarding event management, the documentation indicated that the maximum timeout for an event was 120 minutes, so I decided to configure a custom event in order to avoid having to handle the case where the execution interval is greater than the maximum timeout.

(but you're absolutely right, it's indeed possible to manage the refresh of the event in the script, maybe I've been a little lazy.^^)

14 Jan 2021 06:37 PM

Hi @Aymeric B.

you just gave me a great idea on how to do the refresh better; I will include that in my plugin.

The different thresholds for different groups of certificates could be covered with different instances of the plugin. If you know which sites (synthetic monitors) have which certificates, you could assign different tags in DT, and the different instances of the plugin would then pick up those sites with separate thresholds.

14 Jan 2021 08:01 PM

Hello @Reinhard W.

In the how-to, the sentence below is written:

"that is able to access the sites you want to monitor."

I am a bit confused by this, so I need your help to clear it up before using the plugin. We monitor a few eChannel applications with Synthetic browser monitors.

Do you mean the Environment AG should be able to reach that publicly available DNS?

Regards,

Babar

21 Jan 2021 10:05 AM

Hi @Babar Q.,

yes, the AG that is executing the plugin must be able to reach the publicly available DNS/hosts/sites to check the certificates.

This is done independently of the synthetic monitors (which you probably run on Dynatrace's infrastructure).

Reinhard

21 Jan 2021 01:02 PM

Hello @Reinhard W.

Thank you for the confirmation. When uploading the extension, I received a message that it will consume DDUs.

Is this true, or was it just a default message?

Regards,

Babar

21 Jan 2021 01:03 PM

This plugin doesn't create any custom metrics, only events, so it will not consume any DDUs.

15 Jan 2021 10:29 AM

Thanks for the feedback (@Aymeric B.). I've added functionality to the plugin so that it now also checks previously created problems/events and whether their condition is still met (outside of the normal long cert check interval). It does so by fetching the event/problem and checking whether it is close to its timeout (the max. 120 minutes). If this is the case, it checks those hosts and makes sure the problem is refreshed or, if the failure condition no longer exists, closes the problem.
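The refresh decision described here can be sketched as a small pure function. This is only an illustration of the idea, not the plugin's code: the function name, the 10-minute safety margin, and the "days left at or below threshold" failure rule are assumptions.

```python
def next_action(minutes_since_refresh, days_left, threshold_days,
                timeout_minutes=120, margin_minutes=10):
    """Decide what to do with an already open expiry event.

    Returns "wait" while the event is not yet close to timing out,
    "refresh" if the certificate is still inside the alert window,
    and "close" if the failure condition no longer exists.
    """
    if minutes_since_refresh < timeout_minutes - margin_minutes:
        return "wait"
    # Close to the timeout: re-check the certificate state (assumed rule).
    return "refresh" if days_left <= threshold_days else "close"
```

For example, `next_action(115, 12, 15)` yields `"refresh"`, while the same call with a renewed certificate (`days_left=364`) yields `"close"`.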

Additionally I added proxy support for the plugin. This can be useful in cases where direct access to the sites to check isn't possible. The plugin will only use TLSv1.2 for security reasons.
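As an illustration of restricting a client to TLSv1.2: the plugin itself uses OpenSSL (per an earlier reply), whereas this sketch uses Python's standard `ssl` module instead, so it shows the general technique rather than the plugin's implementation. Proxy handling is not shown.

```python
import ssl


def make_tls12_context() -> ssl.SSLContext:
    """Return a client context that negotiates TLSv1.2 and nothing else."""
    ctx = ssl.create_default_context()
    # Pin both ends of the allowed protocol range to TLSv1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    ctx.maximum_version = ssl.TLSVersion.TLSv1_2
    return ctx
```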

31 Mar 2021 10:16 PM

@r_weber this looks good, it makes sense to leverage the Synthetic checks. However, there are cases where a client doesn't use Synthetic, and being able to add domains/FQDNs manually still makes sense. I guess for those, I'll continue to leverage @leon_vanzyl's plugin 😎

Question: does the plugin work for SaaS and Managed? I've set it up on a Managed instance, but I'm not sure what to add to the 'Dynatrace Tenant UUID to report to' field, the env. ID? Incl. or excl. the /e/? Or the entire env. URL, incl. the https://FQDN/e/?

01 Apr 2021 02:17 PM

Hi @andre_vdveen ,

you can always configure your synthetic monitors (but disable them) and the plugin would pick them up as well 🙂

Re SaaS/Managed:

As the plugin uses the remote execution engine, it pushes the notification via the AG API. This API URL is always https://localhost/e/<tenantid>, regardless of Managed or SaaS, so you only need the tenant ID and API key.
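A small sketch of how a request target could be assembled from just the tenant ID and API key. The base URL is the one quoted above; the helper names and the example API path are illustrative, and the `Api-Token` authorization scheme is the one Dynatrace documents for its REST API (worth double-checking for your version):

```python
def ag_api_url(tenant_id: str, path: str, base: str = "https://localhost") -> str:
    """Build an API URL on the local ActiveGate for the given tenant."""
    return f"{base}/e/{tenant_id}/{path.lstrip('/')}"


def auth_headers(api_token: str) -> dict:
    """HTTP headers carrying the API token."""
    return {"Authorization": f"Api-Token {api_token}"}
```

For instance, `ag_api_url("abc12345", "/api/v1/events")` gives `https://localhost/e/abc12345/api/v1/events`; only the tenant ID goes into the configuration field, not the `/e/` prefix or the full environment URL.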

12 Apr 2021 07:51 PM

Hi @r_weber thanks for the feedback, didn't realize it will still work even with the monitors disabled! 🙂

I've deployed the plugin as I normally would, but I couldn't get it working, no matter what I tried. Upon checking the logs, I noticed this:

Token is missing required scope. Use one of: ExternalSyntheticIntegration (Create and read synthetic monitors, locations, and nodes)

Turns out the token permissions in the documentation need some updating, as it only mentions:

- 'Read synthetic monitors, locations, and nodes'

- 'Access problem and event feed, metrics, and topology' and

- 'Data Ingest, e.g. metrics and events'

After adding the missing token scope, it is working now - very cool, thank you! 😉

14 Apr 2021 05:14 PM

Hi @andre_vdveen ! Thanks for pointing that out! I've corrected the token scope requirements!

20 Apr 2021 10:03 AM

Hi @r_weber,

You said the Synthetic monitors can be disabled and the certificate checks should still work, correct?

Perhaps I'm doing something wrong, but if I have a Synthetic test set up but disable it, the certificate check never seems to execute/check that monitor, even though the threshold condition is met (85 days till expiry, threshold set to 90 days)?

If I enable the Synthetic monitor, let it run once, and then disable it, it seems to work OK (it raises a problem for the certificate's expiry date) but I can't confirm if it will close the problem once the certificate is updated while the monitor is disabled.

20 Apr 2021 11:35 AM

Hi @andre_vdveen ,

sorry, I checked my code and you are right. It used to work with disabled monitors as well, but I restricted that to enabled ones only due to the large number of monitors I was working with.

Give me a bit, I will add an option to make this configurable.

Reinhard

20 Apr 2021 12:20 PM - last edited on 28 Mar 2023 07:46 PM by Ana_Kuzmenchuk

Hi @andre_vdveen,

Please see the updated version (1.8) (deployment package); it introduces an option in the configuration UI to also consider disabled monitors.

Reinhard

21 Apr 2021 06:52 AM

Hi @r_weber,

Fantastic! It now works as explained 😄

Thank you for your work and effort and for the support with the plugin and for answering all my questions 😉

21 Apr 2021 09:46 PM

Hi @andre_vdveen ,

you are very welcome! Thanks for your feedback and glad it's useful and being used!

16 Sep 2021 10:22 AM

Hi @r_weber

I would like to configure SSL certificate expiry checks via Dynatrace, but I am not familiar with this tool.

Currently the client is not using any Synthetic monitors.

I have set up a Linux AWS instance and installed an ActiveGate on it. It is visible in Dynatrace. I then tried to upload your cert check plugin and configure the task, but I am getting:

Configuration Error (Extension Doesnt Exist on given host)

I am not sure what the endpoint name is. Kindly guide me.

17 Sep 2021 11:09 AM

Hi @Sankar ,

you probably forgot to also upload the plugin to the ActiveGate itself? It needs to be added via the UI (so that you can configure it in the UI), but also to the ActiveGate plugin directory (which is likely /opt/dynatrace/remotepluginmodule/plugin_deployment).

Please copy the .zip archive to that directory and unzip it. Then this error should go away.

/opt/dynatrace/remotepluginmodule/plugin_deployment$ ls -1

custom.remote.python.certcheck

custom.remote.python.certcheck.zip

24 Dec 2021 09:17 PM

Very nice!

As a constructive comment, and since I am posting this on December 24th, here is my wishlist 🙂

Option to configure per test configs with the tags (proxy, cert validity days, problem generation-yes/no).

Option to run on a synthetic activegate as our network rules are already set on those.

I wish you all a great holiday season!

31 Dec 2021 02:00 PM

Hi @AlanZ ,

If I understand you correctly: you want to add tags to a synthetic monitor config that are then used by the plugin for its execution? E.g. tag them with cert_days: 30, proxy: my.proxy.com:3128, create problem: yes, and upon execution these values are used during the check?

If you want to run the plugin on a synthetic AG, this AG must support plugin execution. That is not a limitation of the plugin itself, only a configuration setting on the AG to support synthetic AND plugin execution.

31 Dec 2021 11:09 PM

Yes exactly as you described, tags on synthetic tests themselves so that monitoring can be configured independently for each test as needed.

Thanks for the tips on the AG, not so sure a synthetic activegate can be enabled for plugins but I'll continue my research. Any hints appreciated.

31 Jan 2022 10:15 PM

@AlanZ that's an interesting request. I get where you are going and it would be kind of cool, but I feel like once you start to do that you might regret it. Dynatrace doesn't do a great job of tag organization or display, and because of the 5+ tags you'd need to add to each entity, it might become difficult to manage. Plus, DT doesn't yet allow editing of tags; you have to destroy and then re-create them.

I'm also thinking, kind of on the spot, that we all like configurations to stay somewhat centralized and applied broadly vs individually. Thinking this would get really hard to control, again because DT doesn't have a great interface to manage tagging.

I'm the last person to stand in the way of innovation. But if @r_weber builds this in, I do ask that it please be made optional.

I do like your idea of "problem generation-yes/no" though. Wondering though if there is a different way to obtain that same result. I could see some benefits to this one.

01 Feb 2022 09:01 AM

Thanks for the ideas and the feedback!

I agree that some customization of the checks via tags makes sense, and it should be an optional fallback or override. E.g. a tag "proxy: host.domain:port" on a synthetic monitor could be used, if present, to connect via a proxy. The tag "expiry: 30" could be used to override the default setting in the endpoint configuration.

I can see the benefit of that, especially in terms of user permission control in larger environments, where an admin might want to define the standard check configuration and an individual synthetic monitor owner might want to override this standard with their own thresholds.

Of course you can freely combine this with the approach you mentioned earlier @ct_27 where you have different configurations based on different tags.
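The tag-based override idea could look roughly like this. It is a sketch only: tags are assumed to arrive as plain "key: value" strings, and the function and config key names (`expiry_days`, `proxy`) are hypothetical rather than the plugin's actual ones.

```python
def overrides_from_tags(tags, defaults):
    """Return the endpoint defaults, overridden by 'expiry:' / 'proxy:' tags if present."""
    config = dict(defaults)
    for tag in tags:
        # Split on the first colon only, so "proxy: host:3128" keeps its port.
        key, sep, value = tag.partition(":")
        if not sep:
            continue  # plain tag without a value, nothing to override
        key, value = key.strip().lower(), value.strip()
        if key == "expiry" and value.isdigit():
            config["expiry_days"] = int(value)
        elif key == "proxy" and value:
            config["proxy"] = value
    return config
```

Monitors without such tags simply keep the endpoint's defaults, which matches the "optional fallback or override" behaviour discussed above.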

09 Feb 2022 11:22 PM

I just got one ActiveGate working with both HTTP monitors AND this extension (and, in fact, with several other extensions I have on it). You can get some tips on how to do this in this thread:

Please don't forget that this is not officially supported by Dynatrace, so be careful with its usage!

21 Jan 2022 04:03 PM

Just want to share that I have created a new build of the plugin (same version) with Python 3.8. It was not working on a recent activegate since the update from 3.6 to 3.8.

I have uploaded my build here: https://github.com/360Performance/dynatrace-plugin-certcheck/issues/4

31 Jan 2022 07:43 PM

We have implemented this today as a POC for now. I noticed that at the synthetic level there is also an option to do an SSL cert expiry check and fail if the cert expires within the next X days. Just so I can understand things more completely here, what would be some key benefits of using this AG extension rather than using this option at the synthetic level?

A few things that come to mind include not having to toggle this setting on for each synthetic; this extension will dynamically pick up new synthetics. In our env we could put in 1 tag and monitor all of them and any new ones created, although undoubtedly some teams will want a different value for their days check than others, so we may end up creating multiple endpoints. The other benefit I see is that the AG extension simply creates an event and does not fail the synthetic, as using this setting at the synthetic level would. Some of our teams have the availability % of their synthetic tied into a report that is sent out to mgmt each month. We don't actually want to fail the synthetic in this case because the site is actually up; a notification (without a synthetic failure) of an upcoming expiry is better.

Appreciate any feedback here and great job with this extension! I am just trying to weigh both options here.

31 Jan 2022 10:02 PM - edited 31 Jan 2022 10:06 PM

@sivart_89 I have to say, this extension is AMAZING. We try to stay as close to native functionality as we can but in this case, the extension provides so many more benefits over native.

1) Cost: we have nearly 2,000 cert checks (yes, we have that many purchased certs). This extension lets us manage them in DT but run far more robust cert checks at no charge (unless you turn on the extension's optional metric recorder, which we do).

2) We check certs every 24 hours. DT's solution only allows a max of 60 minutes between checks, which again you'd have to pay for because you're using DT's native solution.

3) Problems won't flutter. This extension not only opens a far more informative problem, but it then self-checks every minute on the problems it opened to see whether the cert issue has been resolved. If not, it keeps the same problem open for days, like it should. DT allows a problem to exist for a max of 2 hours before it closes it automatically (or marks it as a frequent issue [I think this is a design flaw they hid behind frequent issue]).

4) This one updates how many days remain until the cert expires; Dynatrace just tells you it will expire within whatever the threshold value is (30 days or whatever).

5) The Event details and the Problem details tell you far more information like # of days from current date, CommonName, Issuer, NotAfter, NotBefore. It goes negative in days if the cert expired XXX days ago.
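To illustrate the day count, including the negative values for already expired certificates, here is a minimal sketch using only the standard library. It assumes the `NotAfter` value is available in the textual format that Python's `ssl` module uses (for example `"Feb 22 00:00:00 2022 GMT"`); how the plugin itself computes the number is not shown in this thread.

```python
import math
import ssl
from datetime import datetime, timezone


def days_until_expiry(not_after: str, now: datetime) -> int:
    """Whole days from `now` until the certificate's NotAfter time.

    The result is negative once the certificate has expired.
    """
    expiry_ts = ssl.cert_time_to_seconds(not_after)  # seconds since the epoch, UTC
    return math.floor((expiry_ts - now.timestamp()) / 86400)


if __name__ == "__main__":
    print(days_until_expiry("Feb 22 00:00:00 2022 GMT", datetime.now(timezone.utc)))
```

Rounding down means a certificate that expired half a day ago already reports -1.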

We actually have a few "SSLxx" profiles pre-setup. The xx is how many days until expiration to alert. This lines up with the tag on the Synthetic. So if someone says that instead of 30 days they want to be alerted at 15 days, we just change the tag from SSL30 to SSL15, and it automatically adjusts to the new threshold.
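The naming convention can be made explicit with a tiny helper that reads the threshold out of such a tag. This is purely illustrative of the SSLxx convention; in the setup described here the threshold lives in each endpoint profile, and the helper name is made up.

```python
import re

_SSL_TAG = re.compile(r"^SSL(\d+)$")


def threshold_from_tags(tags, default=None):
    """Return the day threshold encoded in the first SSLxx tag, else `default`."""
    for tag in tags:
        match = _SSL_TAG.match(tag)
        if match:
            return int(match.group(1))
    return default
```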

WishList: I wish there were a way to do a Warning and a Critical alert. I have multiple ideas on ways to do this but the most user friendly would be a checkbox to activate "Warning Level" then allow the user to set another value for when to trigger a Warning alert if it goes below said value. It's up to the user to not be stupid and enter 15 for warning and 30 for critical.

Wishlist#2: ability to handle wildcard certificates. No idea how to suggest this but know it's something we need.

01 Feb 2022 11:29 AM

Hi @ct_27 ,

regarding the wildcard certificates: what would your expectation be here? It shouldn't be too difficult to read the SAN extension and report (separately) whether the hostname of the monitor matches the alternative hostname list.

Currently the plugin only checks the expiry date of the certificate but doesn't verify a hostname match. (This is explicitly disabled on the SSL connection test to allow the use of the plugin with self-signed certificates as well, without the need to import a CA for the plugin.)

I could think of doing a manual (optional) hostname validation against the CN and SAN (wildcard) of the certificate and reporting this as a separate error ("Certificate doesn't match hostname") in addition to the expiry alert.
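Such a manual hostname check could be sketched as follows, assuming the CN and SAN DNS names have already been extracted from the certificate into a list of strings. The function name is hypothetical, and the wildcard rule used (a leading `*.` covers exactly one leftmost label) is the common convention, simplified here:

```python
def hostname_matches(hostname, cert_names):
    """Return True if `hostname` matches any CN/SAN entry in `cert_names`."""
    host = hostname.lower().rstrip(".")
    for name in cert_names:
        pattern = name.lower().rstrip(".")
        if pattern == host:
            return True
        if pattern.startswith("*."):
            suffix = pattern[1:]  # e.g. ".example.com"
            label = host[:-len(suffix)] if host.endswith(suffix) else ""
            # The wildcard must stand for exactly one non-empty label.
            if label and "." not in label:
                return True
    return False
```

With this rule, `*.example.com` matches `www.example.com` but neither `example.com` nor `a.b.example.com`.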

Would that help?

kr,

Reinhard

03 Feb 2022 08:41 AM - last edited on 28 Mar 2023 07:47 PM by Ana_Kuzmenchuk

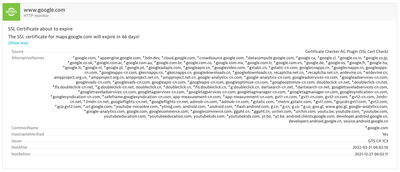

Hi @ct_27 ,

I looked into the wildcard certificate topic and added some extra info to the problem description. Actually, as the plugin doesn't verify the certificate's common name and alternative names against the hostname used in the synthetic monitor, you can already use it to check the expiry date of any certificate, wildcard or not.

It will not report any hostname mismatch as problem.

However this could be added. For now I just added a custom info field (HostnameVerified) that tells you if the hostname matches either the CN or the SAN list:

e.g. google uses a wildcard certificate:

kr,

Reinhard

01 Feb 2022 08:02 AM

Hi @sivart_89 ,

thanks for giving it a try and for the kudos! I have created the plugin out of need before Dynatrace was able to do any certificate duration validation. Shortly after I released this Dynatrace added the basic functionality of checking expiry dates as well.

But as you have found, typically you do not want to fail a synthetic monitor when the certificate is about to expire as it usually doesn't impact any availability or users until it really expires. Hence my approach.

Another reason for my approach was the frequency of the check. While it might make sense to check a site every minute it certainly isn't required for things like certificates.

The third reason (not yet implemented) was to perform extended checks on certificates, like cipher strength and so on (I might never do it, but it is possible).

kr,

Reinhard

03 Feb 2022 03:23 PM

@ct_27 @sivart_89 @Bert_VanderHeyd @AlanZ

Good news! I've added most of your suggestions to the latest version of my plugin. This includes:

- overriding global endpoint configuration for expiry and proxy connections with tags on synthetic monitors

- validating the hostname used for the SSL connection against common name and alternative names found on the certificate. Note that this only posts a custom info on a problem but wouldn't open a problem if the certificate is not about to expire.

Enjoy and as always feedback is welcome!

03 Feb 2022 04:35 PM - edited 03 Feb 2022 04:37 PM

Thank you. I'm scheduled to meet with co-worker later today. We'll deploy the new code and I'll get his input regarding wildcard stuff.

I never thought of "this enables non-admin Dynatrace users with no access to global plugin configuration to somewhat customize their checks". So @AlanZ idea is a really good one. Thanks for implementing it and thanks @AlanZ for the great suggestions.

04 Feb 2022 10:07 PM

Well, the real thanks goes to whoever thought of tags in the first place. Maybe "tag configuration" will catch on for other features 🙂

04 Feb 2022 11:08 PM

Funny you mention that. I actually created an RFE asking Dynatrace to add a tag context type of [CONFIGURATION]. I agree; hopefully it catches on.

04 Feb 2022 09:56 PM

@r_weber I love it! Regarding limiting the endpoint to a single synthetic via a tag: does this field accept a key-value pair for the tag, or just a key?

04 Feb 2022 10:11 PM

@sivart_89 it would be a single key tag only e.g. “sslcheck”

08 Feb 2022 11:51 PM

First of all, let me congratulate you for this extension!

I have just installed it and am getting the following error when configuring it for the first time:

Error(No module named '_cffi_backend')

I have checked the code, and cffi_backend doesn't seem to be included... Any ideas?

09 Feb 2022 12:17 AM

I should have read this before:

https://github.com/360Performance/dynatrace-plugin-certcheck/issues/4

I'm using it with a 1.229.168 ActiveGate. I will try a newer one later today...

09 Feb 2022 08:01 AM

Hi @AntonioSousa ,

maybe I should consider building the newest version still for older AGs while these are still around. Alternatively you could use one of the older releases, which are built for Python 3.6 (missing only minor config-via-tag feature).

09 Feb 2022 09:08 AM - edited 09 Feb 2022 09:08 AM

Thanks @r_weber ,

I considered switching to an older version, but didn't even try, as I believe, given the way Dynatrace implements versioning in Activegate extensions, it wouldn't let it work after having installed it in a newer version.

I'm going to compile it locally in my 3.6 dev environment.

09 Feb 2022 10:20 PM

Worked as expected: I had to increment the version, recompile and deploy. It worked afterwards with no issues.

10 Feb 2022 02:03 PM

When the extension checks for already open problems, how is it doing that? I ask because in my initial testing a few weeks ago I had it check a synthetic, and a problem was created with no issues. I manually closed that problem because I was still vetting this extension before rolling it out to our customers and simply trying to understand it better (the synthetic I used was not mine, and I didn't yet want the app team to see the problem, which is why I closed it). Anyway, that same synthetic will now not open a new problem even though the cert is going to expire in 12 days and the check threshold is 15 days. I pulled the lines below from the AG logs and do see an error while trying to get the synthetic hosts, so maybe that is the issue? The 14082 minutes lines up with when the original problem was created, back on 1/31. I replaced the URL with xxxx.

Ideally, yes, we should not be closing problems manually, especially when the problem is not actually resolved 🙂 I was simply testing here and now don't know how to get back to a state where a problem will be created, aside from removing and reinstalling the extension.

2022-02-10 13:55:01.758 UTC INFO [Python][6276281846038309027][SSL_Certificate_Check][139788694841088][ThreadPoolExecutor-0_1] - [getMonitorsWithOpenEvents] A problem for SS9 D01 is already open for 14082 minutes

2022-02-10 13:55:01.993 UTC ERROR [Python][6276281846038309027][SSL_Certificate_Check][139788694841088][ThreadPoolExecutor-0_1] - [getSSLCheckHosts] Error while trying to get synthetic hosts

2022-02-10 13:55:01.994 UTC INFO [Python][6276281846038309027][SSL_Certificate_Check][139788694841088][ThreadPoolExecutor-0_1] - [query] Refreshing open problems for: ['xxxxxx']

2022-02-10 13:55:02.062 UTC INFO [Python][6276281846038309027][SSL_Certificate_Check][139788694841088][ThreadPoolExecutor-0_1] - [getCertExpiry] Certificate for xxxxxxx (MonitorID: SYNTHETIC_TEST-356B7E801199A8CC) expires in 12 days: ERROR

10 Feb 2022 02:42 PM

Hi @sivart_89

that is interesting. It actually checks the problem-opening error events. Those should also clear upon manual close of a problem.

Can you try to set the threshold to 10, so that the check turns into OK on the next run, and then set it back to 15?

11 Feb 2022 11:54 PM

That did work: setting the cert expiry threshold to a lower number, one where the extension sees that the cert expiry date is within the threshold and subsequently marks the event as closed. Any clue why this would be needed? We plan to use 1 endpoint, and anyone who wants to use this check will add the tag to their synthetic. There will unfortunately be a time when someone closes the problem manually even though the issue is not actually addressed (it won't happen regularly, I hope, but it will happen). This would mean that I would then have to change the threshold to something lower to 'force' the extension to mark the event as closed, OR I just change it to something like 1 day and then, after the clearing, change it back to our standard. Ideally I would love to not have to do this 🙂

Also, through my testing when I do close the ticket manually I see the time value for the event being updated to the time when the problem was closed. As an example, I had a problem get created at 18:15 today and I manually closed it at 18:29. While the problem was open the time value for the event was accurate (showed 18:15) but when I closed out the problem it now shows 'today, 18:29-now'. It shows now because the extension thinks the event is still open. This update of the start time for the event does not occur with problems that close automatically, I've only seen this issue if you manually close the problem.

12 Feb 2022 10:51 AM

Hi @sivart_89 ,

interesting observation. It seems that manually closing the problem doesn’t “clear” the problem-opening event, so from the plugin's perspective the opening event is just refreshed, but Dynatrace doesn’t interpret this as another problem-opening event.

I might need to do additional checks for this special case of manual close... or state in the problem description that a manual close means the certificate expiry is ignored forever 😉

15 Feb 2022 02:28 PM

Hi @r_weber,

If this is something planned to be fixed then I will wait for the new version before communicating this out to our users.

16 Feb 2022 10:25 AM

Hi @sivart_89 ,

The new version (1.18) will now reopen problems if the error condition is still met after a manual close of a problem. (Please note the API token permission change requirement.)
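The manual-close case discussed in this exchange can be summarised as a decision table. The sketch below is a hypothetical reconstruction of that logic for illustration only; the function name, the three boolean inputs, and the action labels are assumptions and not taken from the plugin's code.

```python
def reconcile(cert_failing: bool, event_open: bool, problem_open: bool) -> str:
    """Pick an action from the certificate state and what Dynatrace currently shows."""
    if cert_failing:
        if not event_open:
            return "open"      # first detection: post a problem-opening event
        if not problem_open:
            return "reopen"    # event still open but problem was closed manually
        return "refresh"       # keep the existing problem alive
    # Certificate is fine again: clear anything still open.
    return "close" if event_open else "none"
```

The `"reopen"` branch is the special case: the opening event is still active, so merely refreshing it would not create a new problem.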

Hope that helps! Enjoy!

kr,

Reinhard

08 Jun 2022 11:47 AM

Since I migrated to AG 1.239, the extension has stopped working. I am getting the errors below in the gateway logs. I tried some tricks recompiling the extension, but with no luck...

2022-06-08 10:39:00.141 UTC [000045ac] severe [native] 139751677454080(ThreadPoolExecutor-0_0) - [set_full_status] No module named '_cffi_backend'

Traceback (most recent call last):

File "/var/lib/dynatrace/remotepluginmodule/agent/runtime/engine_unzipped/ruxit/plugin_state_machine.py", line 336, in _execute_next_task

self._query_plugin()

File "/var/lib/dynatrace/remotepluginmodule/agent/runtime/engine_unzipped/ruxit/plugin_state_machine.py", line 663, in _query_plugin

self._plugin_run_data = self._create_plugin_run_data()

File "/var/lib/dynatrace/remotepluginmodule/agent/runtime/engine_unzipped/ruxit/plugin_state_machine.py", line 636, in _create_plugin_run_data

plugin_module = importlib.import_module(self.metadata["source"]["package"])

File "/opt/dynatrace/remotepluginmodule/agent/plugin/python3.8/importlib/__init__.py", line 127, in import_module

return _bootstrap._gcd_import(name[level:], package, level)

File "<frozen importlib._bootstrap>", line 1014, in _gcd_import

File "<frozen importlib._bootstrap>", line 991, in _find_and_load

File "<frozen importlib._bootstrap>", line 975, in _find_and_load_unlocked

File "<frozen importlib._bootstrap>", line 671, in _load_unlocked

File "<frozen importlib._bootstrap_external>", line 843, in exec_module

File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed

File "/opt/dynatrace/remotepluginmodule/plugin_deployment/custom.remote.python.certcheck/certcheck.py", line 28, in <module>

from OpenSSL import SSL

File "/opt/dynatrace/remotepluginmodule/plugin_deployment/custom.remote.python.certcheck/OpenSSL/__init__.py", line 8, in <module>

from OpenSSL import crypto, SSL

File "/opt/dynatrace/remotepluginmodule/plugin_deployment/custom.remote.python.certcheck/OpenSSL/crypto.py", line 11, in <module>

from OpenSSL._util import (

File "/opt/dynatrace/remotepluginmodule/plugin_deployment/custom.remote.python.certcheck/OpenSSL/_util.py", line 5, in <module>

from cryptography.hazmat.bindings.openssl.binding import Binding

File "/opt/dynatrace/remotepluginmodule/plugin_deployment/custom.remote.python.certcheck/cryptography/hazmat/bindings/openssl/binding.py", line 14, in <module>

from cryptography.hazmat.bindings._openssl import ffi, lib

ModuleNotFoundError: No module named '_cffi_backend'

2022-06-08 10:39:01.058 UTC [000044b7] info [native] 139751206131456(MainThread) - [one_plugin_loop_step] plugin <RemotePluginEngine, meta_name:custom.remote.python.certcheck id:0x7f1a39a37580> threw exception No module named '_cffi_backend'


08 Jun 2022 12:06 PM

Seems like a problem on my side. I'll comment when solved.


08 Jun 2022 12:22 PM

Yep, it was a false alarm. I had previously had to recompile the extension, and for some reason it was incorporating a Python 3.6 file, instead of the correct one. Recompiled again, with your new code, and it works 🙂
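For anyone hitting the same `No module named '_cffi_backend'` error after a rebuild: one quick way to spot a stray binary built for another Python version is to compare the ABI tag in each compiled module's file name with the suffixes the running interpreter accepts. This is only a sketch and not part of the plugin. The function name `find_mismatched_extensions` is made up for illustration, and the result is only meaningful when the script runs under the same Python version as the ActiveGate (3.8 in the traceback above).

```python
import sys
from importlib.machinery import EXTENSION_SUFFIXES
from pathlib import Path


def find_mismatched_extensions(plugin_dir, valid_suffixes=tuple(EXTENSION_SUFFIXES)):
    """Return compiled modules whose ABI tag this interpreter cannot load.

    Hypothetical helper: it only looks at file names, it does not try to
    import anything.
    """
    mismatched = []
    for path in sorted(Path(plugin_dir).rglob("*")):
        if not path.is_file() or path.suffix not in (".so", ".pyd"):
            continue
        # Everything after the first dot is the ABI tag plus the extension,
        # e.g. ".cpython-36m-x86_64-linux-gnu.so" for a Python 3.6 build.
        full_suffix = "." + path.name.split(".", 1)[1]
        if full_suffix not in valid_suffixes:
            mismatched.append(path)
    return mismatched


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python check_ext.py <path to the unpacked plugin directory>
    for bad in find_mismatched_extensions(sys.argv[1]):
        print("built for a different interpreter:", bad)
```

An empty result does not prove the build is fine (a module can also be missing entirely), but a file tagged `cpython-36m` in a plugin loaded by Python 3.8 would show up here.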


08 Jun 2022 01:21 PM

Are you aware of any problem that this extension might have running on a Windows Activegate?


08 Jun 2022 01:25 PM

Hi @AntonioSousa, I've used it successfully on a Windows-based AG as a test.


08 Jun 2022 01:29 PM

Thanks! Currently have two separate environments, installed independently, giving the following error:

Error(cannot import name 'x509' from 'cryptography.hazmat.bindings._rust' (unknown location))

Trying to figure it out 😉


08 Jun 2022 01:43 PM

I see the same now; my previous attempts were on older AG versions I think, not the latest where it is using Python 3.8.


08 Jun 2022 03:12 PM

Thanks for confirming. Still haven't got the solution. Attaching more logs, might be easier for @r_weber or others...

2022-06-07 15:06:54.488 UTC [00001330] severe [native] 4912(ThreadPoolExecutor-0_0) - [set_full_status] cannot import name 'x509' from 'cryptography.hazmat.bindings._rust' (unknown location)

Traceback (most recent call last):

File "C:\ProgramData/dynatrace/remotepluginmodule/agent/runtime/engine_unzipped\ruxit\plugin_state_machine.py", line 336, in _execute_next_task

self._query_plugin()

File "C:\ProgramData/dynatrace/remotepluginmodule/agent/runtime/engine_unzipped\ruxit\plugin_state_machine.py", line 663, in _query_plugin

self._plugin_run_data = self._create_plugin_run_data()

File "C:\ProgramData/dynatrace/remotepluginmodule/agent/runtime/engine_unzipped\ruxit\plugin_state_machine.py", line 636, in _create_plugin_run_data

plugin_module = importlib.import_module(self.metadata["source"]["package"])

File "C:\Program Files/dynatrace/remotepluginmodule/agent/plugin/python3.8\importlib\__init__.py", line 127, in import_module

return _bootstrap._gcd_import(name[level:], package, level)

File "<frozen importlib._bootstrap>", line 1014, in _gcd_import

File "<frozen importlib._bootstrap>", line 991, in _find_and_load

File "<frozen importlib._bootstrap>", line 975, in _find_and_load_unlocked

File "<frozen importlib._bootstrap>", line 671, in _load_unlocked

File "<frozen importlib._bootstrap_external>", line 843, in exec_module

File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed

File "C:\Program Files/dynatrace/remotepluginmodule/plugin_deployment/custom.remote.python.certcheck\certcheck.py", line 28, in <module>

from OpenSSL import SSL

File "C:\Program Files/dynatrace/remotepluginmodule/plugin_deployment/custom.remote.python.certcheck\OpenSSL\__init__.py", line 8, in <module>

from OpenSSL import crypto, SSL

File "C:\Program Files/dynatrace/remotepluginmodule/plugin_deployment/custom.remote.python.certcheck\OpenSSL\crypto.py", line 8, in <module>

from cryptography import utils, x509

File "C:\Program Files/dynatrace/remotepluginmodule/plugin_deployment/custom.remote.python.certcheck\cryptography\x509\__init__.py", line 6, in <module>

from cryptography.x509 import certificate_transparency

File "C:\Program Files/dynatrace/remotepluginmodule/plugin_deployment/custom.remote.python.certcheck\cryptography\x509\certificate_transparency.py", line 10, in <module>

from cryptography.hazmat.bindings._rust import x509 as rust_x509

ImportError: cannot import name 'x509' from 'cryptography.hazmat.bindings._rust' (unknown location)


09 Jun 2022 03:04 PM

@AntonioSousa I see someone else already logged an issue for this:

https://github.com/360Performance/dynatrace-plugin-certcheck/issues/9

I tried to get the necessary dependencies installed on my Windows host, but alas it is still not working.


09 Jun 2022 05:49 PM

I have also tried some more tricks, but have not managed to solve the issue.

One thing that does surprise me is the involvement of "certificate_transparency". It shouldn't be needed, and maybe going to an older version of openssl might help. Maybe @r_weber might help diagnosing this?


05 Oct 2022 04:05 AM - edited 05 Oct 2022 04:18 AM

Hi all,

I'm encountering the same issue on a Linux AG. It works well in our Dev environment (RHEL 8 or above) but not on the Prod Linux AG (RHEL 7.9).

One of the dependencies on a Linux AG is the "glibc" version. The minimum version the extension requires is GLIBC_2.25. The glibc versions available on each release are:

| RHEL 8.4 | RHEL 7.9 |
|----------|----------|
| 2.28     | 2.17     |
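To check a given ActiveGate host before deploying, the glibc version can be compared against the 2.25 minimum mentioned above. Below is a rough sketch using Python's standard `platform.libc_ver()`. The helper names are made up for illustration, and the 2.25 threshold is taken from this post rather than from any official requirement list. Versions are compared as integer tuples because a plain string comparison would wrongly rank "2.9" above "2.25".

```python
import platform

# Minimum glibc version reported in the post above (GLIBC_2.25).
REQUIRED_GLIBC = (2, 25)


def parse_version(text):
    """Turn '2.17' into (2, 17) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def glibc_is_sufficient(detected, required=REQUIRED_GLIBC):
    """Return True if the detected glibc version meets the minimum."""
    return parse_version(detected) >= required


if __name__ == "__main__":
    lib, version = platform.libc_ver()
    if lib == "glibc" and version:
        ok = glibc_is_sufficient(version)
        print(f"glibc {version}:", "ok" if ok else "too old, try the build for older glibc")
    else:
        # Windows, musl-based systems, etc. report no glibc version here.
        print("could not detect a glibc version on this host")
```

With the values from the table, RHEL 8.4 (2.28) passes and RHEL 7.9 (2.17) does not.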


05 Oct 2022 11:48 AM

Hi @jaysahota ,

please try the _old_gclib build of the plugin. I built this on CentOS 7.9, which should match RHEL 7 and its older glibc.

You can find the plugin build in the 1.40 release.


11 Jul 2022 01:25 PM

I noticed that a recent fix in the Dynatrace API for reading problems had an impact on the plugin's ability to check for open problems correctly. The "fixed" behavior was a string-length limitation in the problem text selector of the API that didn't exist before.

This prevented the plugin from refreshing open problems and problems would automatically time out even though the error condition still existed (the cert was still about to expire).

If you have noticed this behavior please update the plugin to the latest version.


11 Jul 2022 08:45 PM

Thanks a lot, Reinhard, for getting back with the update!


14 Sep 2022 10:02 AM

New Version 1.30 Released

I have released a new version of my plugin to overcome some limitations that seem to have been introduced with the new on-demand executions feature. Prior to this version, problems could not be attached to disabled monitors, so you would not get any problem alerts for them.

Using configured but disabled synthetic monitors for the plugin's SSL check only is an intended way to save on DDU consumption (especially when checking lots of certificates) or where frequent synthetic checks are not required.

This plugin version now fully supports the long-interval checks (24hrs) of certificates also on disabled monitors.

You can find the release here.
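For readers who just want to see what a long-interval expiry check boils down to, here is a minimal standard-library sketch. It is not the plugin's code (the tracebacks earlier in this thread show the plugin uses pyOpenSSL), and the names `days_until_expiry`, `is_expiring`, `fetch_not_after` and the 30-day threshold are assumptions chosen for illustration.

```python
import socket
import ssl
import time

SECONDS_PER_DAY = 86400


def days_until_expiry(not_after, now=None):
    """Days left until the certificate's notAfter date.

    not_after is the 'notAfter' string as returned by getpeercert(),
    e.g. 'Jan  5 09:34:43 2018 GMT'. A negative result means expired.
    """
    if now is None:
        now = time.time()
    return (ssl.cert_time_to_seconds(not_after) - now) / SECONDS_PER_DAY


def is_expiring(not_after, warn_days=30, now=None):
    """True if the certificate expires within warn_days (assumed threshold)."""
    return days_until_expiry(not_after, now) <= warn_days


def fetch_not_after(host, port=443, timeout=10.0):
    """Read notAfter from the certificate a server presents.

    Note: the default context verifies the certificate, so an already
    expired or untrusted certificate raises an ssl.SSLError here
    instead of returning a date.
    """
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]
```

Since certificate dates change rarely, running something like `is_expiring(fetch_not_after(host))` once every 24 hours per endpoint is enough, which matches the long check interval described above.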
