- Dynatrace RUM “Network time” metric might misguide performance investigation process

10 Sep 2021 12:25 PM

In this post I’d like to start a discussion about the metric described in https://www.dynatrace.com/support/help/how-to-use-dynatrace/real-user-monitoring/basic-concepts/user... as Network time. You can find it on various dashboards of the solution.

This metric can be read as the overall delay of the operation caused by the network, but this is far from the truth. I spent some time studying the subject and came to the conclusion that Dynatrace RUM, and possibly other solutions based on W3C Navigation Timing, are unable to identify how the network affects operation performance. But before going further into technical details, let me say a few words about why this metric is important.

Whenever a front-end web application becomes the target of a performance investigation, one of the first things to clarify is the most probable source of its slowness. Before investing serious effort in source code optimization, it is worth understanding what gain we can expect. What if the server processing time or the client-side JavaScript execution time represents only a minor part of the overall delay? Does our application follow best practices when it downloads or revalidates external static objects (images, styles or scripts)? It would be good to have a reliable client/network/server breakdown of the operation time before starting complex (and sometimes costly) optimization research.

Unfortunately, I found that we cannot rely on Dynatrace RUM in this case. The documentation says:

Network time = (requestStart - actionStart) + (responseEnd - responseStart)

That is far from enough to identify the overall network delay of the operation.
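
To make the formula concrete, here is a minimal Python sketch that evaluates it for one action. The `ActionTiming` class, its field names and the sample numbers are assumptions made up for illustration; only the formula itself comes from the documentation quoted above.

```python
from dataclasses import dataclass


@dataclass
class ActionTiming:
    """Timestamps in milliseconds, relative to the start of the user action.

    Hypothetical container: the field names mirror the terms in the quoted
    formula, not any Dynatrace data structure.
    """
    action_start: float
    request_start: float
    response_start: float
    response_end: float


def documented_network_time(t: ActionTiming) -> float:
    """Network time exactly as written in the quoted formula."""
    return (t.request_start - t.action_start) + (t.response_end - t.response_start)


# Made-up example: the request for the page starts 300 ms into the action,
# the first byte arrives at 900 ms, the last byte at 1200 ms.
page = ActionTiming(action_start=0, request_start=300,
                    response_start=900, response_end=1200)
print(documented_network_time(page))  # 600
```

Note that the four inputs describe a single request/response pair. Images, styles and scripts that keep downloading after `response_end` have no term in the formula, so in this sketch they cannot change the result.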

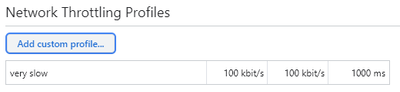

Here is a simple experiment that can be reproduced with various front-end web applications. First, with Chrome DevTools we artificially decrease network speed, increase latency and disable the cache:

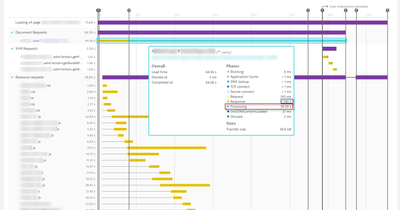

Then we open one of the web pages of a business application that is instrumented with Dynatrace RUM. Here is what we can see on the Dynatrace RUM dashboards:

We can see a lot of heavy static object downloads, and they were the main delay of the operation, but Dynatrace assigns only 7.8 seconds to the “Network time”! I use this artificial example because anyone can repeat it. In reality, we would be unlikely to have 100 kbps with a 1 second RTT. However, I have many practical cases where a great deal of the front-end delay came from downloading static objects. One example was described in my previous post, which has not yet received any attention from the Dynatrace gurus (see https://community.dynatrace.com/t5/Dynatrace-Open-Q-A/Dynatrace-RUM-metrics-meaning/m-p/171557).

The mistake can be avoided if we carefully go through the waterfall diagram of every request, but this doesn’t give the generalized picture that would be very helpful at the early stage of the investigation.

Technically, Dynatrace already has, or can have, all the numbers needed to calculate the Network time properly. Every static object has a Request and a Response time. We would need to apply some algorithm to account for parallelization. We might also need the server-to-client RTT to account for network latency within the “Request” time. All of this could be done either in OneAgent or on the Dynatrace reporting side, if the additional calculation load cannot be afforded on the production servers. But maybe my view of this approach is too shallow, and a proper calculation of Network time is simply impossible with the W3C Resource Timing API…
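
One possible way to account for parallelization, sketched below in Python, is to merge the overlapping transfer intervals of all objects and count each moment of wall-clock time only once. This is just an illustration of the idea, not something Dynatrace is known to do; the function name `merged_busy_time` and the sample intervals are made up.

```python
from typing import List, Tuple

Interval = Tuple[float, float]  # (start_ms, end_ms) of one object's transfer


def merged_busy_time(intervals: List[Interval]) -> float:
    """Wall-clock time during which at least one transfer was in progress."""
    total = 0.0
    current_start = current_end = None
    for start, end in sorted(intervals):
        if current_end is None or start > current_end:
            # Gap before this interval: close the previous merged block.
            if current_end is not None:
                total += current_end - current_start
            current_start, current_end = start, end
        else:
            # Overlap with the current block: extend it if needed.
            current_end = max(current_end, end)
    if current_end is not None:
        total += current_end - current_start
    return total


# Made-up downloads: three overlap, one starts after a pause.
downloads = [(0, 400), (100, 500), (450, 900), (1500, 1800)]
print(sum(end - start for start, end in downloads))  # 1550 (naive sum)
print(merged_busy_time(downloads))                   # 1200.0 (merged)
```

The naive sum counts overlapping transfers twice, while the merged value cannot exceed the duration of the action. Whether the merged value is a fair "network time" is still open, since part of each interval may be server wait rather than transfer.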

It would be nice to have an official comment from Dynatrace on this point.

…and, yes, I have tried to follow up on this issue by opening a ticket with Dynatrace support. One month has passed since I opened #8251 and I have had no constructive reply yet. Now Dynatrace has closed the ticket, offering me a training session 😊

Also, I could not find anyone raising this topic, either in the Dynatrace forum or anywhere else. Maybe I missed something… How do you guys identify performance issues caused by large static objects or by their inefficient handling in client web browsers?

10 Sep 2021 01:10 PM

I have had this discussion in the past too, also in the synthetic context. First of all, let me congratulate you on such a thorough investigation. Throttling the speed is something I found quite interesting in this analysis.

Trying to figure out the network contribution is always particularly difficult. We have to consider the usual network latency, but then how should we count requests that are made in parallel, while connections to third parties are also being established? It gets highly complicated pretty fast, and putting that into a single number WILL always be debatable.

Hopefully, we might get more insight into how it is calculated, so we can understand the metric better first 😊

05 Dec 2021 06:19 AM

Really good point. During my troubleshooting, I avoid using "network consumption" metrics (TTL, TCP, DNS, connection failed, etc.). The only useful one is "size of loading", which can make sense if we don't have any cache.

06 Dec 2021 07:29 PM

I think that @Radoslaw_Szulgo just might be the right guy to shed some light on this story. What do you reckon, Radoslaw?

07 Dec 2021 08:24 AM

I'll try to bring some experts here to help you 🙂

Dynatrace Managed expert

08 Dec 2021 07:49 AM

Great, thanks! 🙂

05 Aug 2022 02:19 PM - edited 05 Aug 2022 02:19 PM

In short:

> the problem is to aggregate values for multiple requests into 1 value. requests are in parallel, so what do you consider server time, what network time, when both happen at the same time? This is why we have a strange calculation in place, which confuses everyone.

We're aware of the problem, but we haven't evaluated it yet and we don't know how best to solve it.
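
The ambiguity can be shown with a small Python sketch. It is an illustration under assumed inputs, not the calculation Dynatrace uses. Given hypothetical intervals during which some request was transferring data ("network") and intervals during which some request was waiting for the server ("server"), it splits wall-clock time into network-only, server-only and both.

```python
from typing import Dict, List, Tuple

Interval = Tuple[float, float]  # (start_ms, end_ms)


def split_overlap(network: List[Interval],
                  server: List[Interval]) -> Dict[str, float]:
    """Split wall-clock time into network-only, server-only and both."""
    events = []
    for start, end in network:
        events += [(start, "network", 1), (end, "network", -1)]
    for start, end in server:
        events += [(start, "server", 1), (end, "server", -1)]
    events.sort(key=lambda e: e[0])

    active = {"network": 0, "server": 0}
    result = {"network_only": 0.0, "server_only": 0.0, "both": 0.0}
    previous = None
    for time, kind, delta in events:
        if previous is not None and time > previous:
            span = time - previous
            # Attribute the elapsed span to whatever was active during it.
            if active["network"] and active["server"]:
                result["both"] += span
            elif active["network"]:
                result["network_only"] += span
            elif active["server"]:
                result["server_only"] += span
        active[kind] += delta
        previous = time
    return result


# Made-up action: two transfers, one server wait overlapping both.
print(split_overlap(network=[(0, 300), (600, 1000)], server=[(200, 700)]))
# {'network_only': 500.0, 'server_only': 300.0, 'both': 200.0}
```

The `both` bucket is exactly the part that has no obvious owner: counting it as network, as server, or splitting it is a choice, and each choice yields a different single number.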

Dynatrace Managed expert
