Process memory vs. Heap memory

01 Sep 2022 08:14 AM (last edited on 02 Sep 2022 08:44 AM by MaciejNeumann)

Dear All,

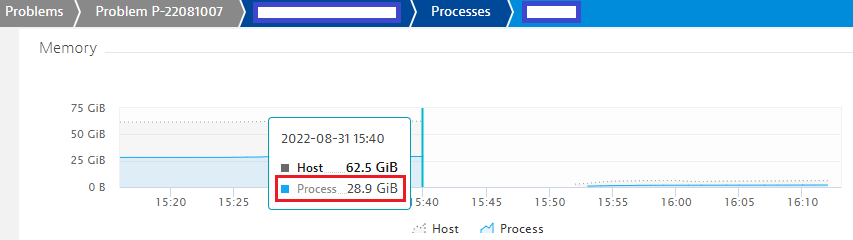

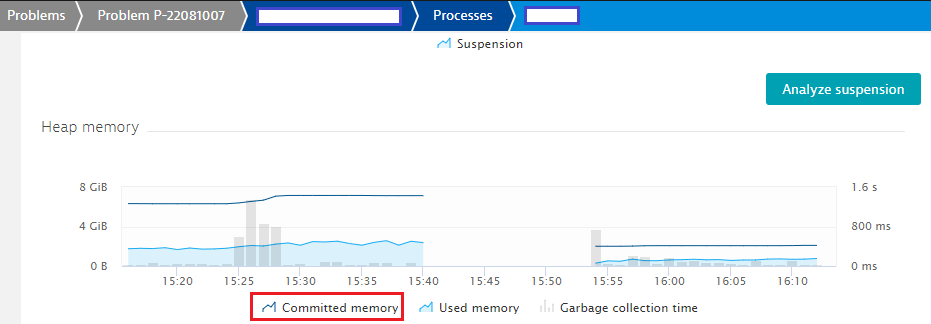

Suppose a process's maximum heap size is configured as 8 GB, while the process memory utilization is 29 GB (this caused memory saturation, even though the heap never reached its maximum size).

What does this actually mean?

Regards,

Babar
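As a quick sanity check on the numbers in the question, assuming both figures refer to the same single JVM process: the committed heap can never exceed the configured maximum, so the memory held outside the heap must be at least the difference.

$$
\text{memory outside the heap} \;\ge\; \text{process memory} - \text{max heap} = 29\,\text{GB} - 8\,\text{GB} = 21\,\text{GB}
$$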

01 Sep 2022 04:46 PM

I'm not an expert by any means, but this looks like a good explanation: https://plumbr.io/blog/memory-leaks/why-does-my-java-process-consume-more-memory-than-xmx. Essentially, the JVM has some additional overhead on top of the heap.
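To see part of that overhead from inside the JVM, a minimal sketch using the standard `java.lang.management` API could print heap and non-heap usage side by side. The class name `HeapVsNonHeap` is hypothetical. Note that the "non-heap" figure only covers JVM-managed pools (such as metaspace and code cache); thread stacks, direct buffers and JNI allocations are not included, so even heap plus non-heap will usually be lower than what the OS reports for the process.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryUsage;

class HeapVsNonHeap {
    static final long MB = 1024 * 1024;

    // Heap as the JVM sees it; getMax() roughly reflects -Xmx (-1 if undefined).
    static MemoryUsage heap() {
        return ManagementFactory.getMemoryMXBean().getHeapMemoryUsage();
    }

    // JVM-managed non-heap pools only (e.g. metaspace, code cache).
    // Thread stacks, direct buffers and JNI allocations are NOT counted here.
    static MemoryUsage nonHeap() {
        return ManagementFactory.getMemoryMXBean().getNonHeapMemoryUsage();
    }

    public static void main(String[] args) {
        MemoryUsage h = heap();
        MemoryUsage nh = nonHeap();
        System.out.printf("heap:     used=%d MB committed=%d MB max=%d MB%n",
                h.getUsed() / MB, h.getCommitted() / MB, h.getMax() / MB);
        System.out.printf("non-heap: used=%d MB committed=%d MB%n",
                nh.getUsed() / MB, nh.getCommitted() / MB);
    }
}
```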

01 Sep 2022 06:17 PM

Hi Babar,

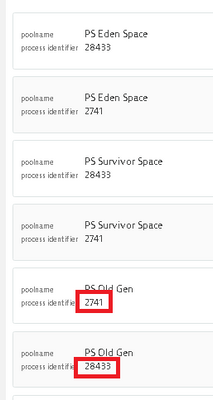

To be honest, I have never seen such a process memory status before. As far as I know, the committed heap memory in the JVM metrics is the combined heap size of all process instances (different process identifiers on the same host). Do you have only one JVM instance on the VM?

Could you share the command-line arguments so we can check the JVM start parameters?
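If it is easier to read the start parameters from inside the application, a minimal sketch could use `RuntimeMXBean.getInputArguments()`, which returns the JVM options (not the arguments passed to `main`). The class `JvmArgs` and its `lastFlag` helper are hypothetical; the helper returns the last match because, when a flag such as `-Xmx` is repeated, HotSpot uses the last value. The maximum heap may also be set via `-XX:MaxHeapSize=`, so check both prefixes. Requires Java 9 or later only for the test's `List.of`.

```java
import java.lang.management.ManagementFactory;
import java.util.List;
import java.util.Optional;

class JvmArgs {
    // JVM options given at start-up (e.g. -Xmx8g), excluding main-method args.
    static List<String> inputArguments() {
        return ManagementFactory.getRuntimeMXBean().getInputArguments();
    }

    // Returns the last option starting with `prefix`; the last occurrence wins.
    static Optional<String> lastFlag(List<String> args, String prefix) {
        String found = null;
        for (String a : args) {
            if (a.startsWith(prefix)) found = a;
        }
        return Optional.ofNullable(found);
    }

    public static void main(String[] args) {
        List<String> jvmArgs = inputArguments();
        jvmArgs.forEach(System.out::println);
        System.out.println("max heap flag: "
                + lastFlag(jvmArgs, "-Xmx").orElse("(no -Xmx, JVM default applies)"));
    }
}
```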

Br, Mizső

02 Sep 2022 10:40 AM

Hi,

Please keep in mind that the sum of all Java memory pools will never equal the total amount of memory the Java process consumes from the OS. The JVM also allocates native memory for thread stacks, the GC, symbols and native byte buffers (NIO).
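A small way to observe the NIO part: memory from `ByteBuffer.allocateDirect` lives outside the Java heap, is limited by `-XX:MaxDirectMemorySize`, and is reported by the platform `BufferPoolMXBean` named `"direct"`. The sketch below (hypothetical class `DirectBufferDemo`, Java 9 or later for `Reference.reachabilityFence`) measures how much that pool grows after one allocation; the heap only gains a small wrapper object.

```java
import java.lang.management.BufferPoolMXBean;
import java.lang.management.ManagementFactory;
import java.lang.ref.Reference;
import java.nio.ByteBuffer;

class DirectBufferDemo {
    // Total capacity of the JVM's "direct" buffer pool (off-heap NIO buffers).
    static long directCapacity() {
        for (BufferPoolMXBean pool :
                ManagementFactory.getPlatformMXBeans(BufferPoolMXBean.class)) {
            if (pool.getName().equals("direct")) return pool.getTotalCapacity();
        }
        return -1; // pool not reported by this JVM
    }

    // Allocates `bytes` outside the heap and returns how much the pool grew.
    static long growthAfterAllocating(int bytes) {
        long before = directCapacity();
        ByteBuffer buf = ByteBuffer.allocateDirect(bytes);
        long after = directCapacity();
        Reference.reachabilityFence(buf); // keep buf alive until measured
        return after - before;
    }

    public static void main(String[] args) {
        int size = 16 * 1024 * 1024; // 16 MB of native (off-heap) memory
        System.out.println("direct pool grew by " + growthAfterAllocating(size) + " bytes");
    }
}
```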

Also consider that not all memory pools are heap pools. Please have a look at the detailed JVM screen, where all pools are listed separately. Maybe the metaspace is getting too large.
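The same heap/non-heap split can be listed per pool with `ManagementFactory.getMemoryPoolMXBeans()`. In this minimal sketch (hypothetical class `MemoryPools`), pools are grouped by `MemoryType`; on HotSpot, `Metaspace` shows up as a `NON_HEAP` pool, and it can be capped with `-XX:MaxMetaspaceSize`. Exact pool names depend on the JVM vendor and the garbage collector in use.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryPoolMXBean;
import java.lang.management.MemoryType;
import java.lang.management.MemoryUsage;
import java.util.LinkedHashMap;
import java.util.Map;

class MemoryPools {
    // Used bytes per pool name, for pools of the given type (HEAP or NON_HEAP).
    static Map<String, Long> usedByPool(MemoryType type) {
        Map<String, Long> result = new LinkedHashMap<>();
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            MemoryUsage usage = pool.getUsage(); // null if the pool is no longer valid
            if (pool.getType() == type && usage != null) {
                result.put(pool.getName(), usage.getUsed());
            }
        }
        return result;
    }

    public static void main(String[] args) {
        for (MemoryType type : MemoryType.values()) {
            System.out.println(type + ":");
            usedByPool(type).forEach((name, used) ->
                    System.out.printf("  %-30s %,d bytes%n", name, used));
        }
    }
}
```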

It is also possible that the application uses native parts via JNI, where custom native code consumes native memory that is not part of the heap.

Last but not least, it is also possible that parts of the program or the JVM itself leak native memory. We have seen this on AIX with old JVMs.

Best

Harry

02 Sep 2022 04:32 PM

You are probably in a scenario where memory leaks are occurring.

I normally follow its behavior after a restart, and if it keeps allocating system memory over time, you are probably in for leak detection. These scenarios are difficult to debug, but if you follow it over time, you will probably do better by taking a memory dump before it creates problems (say, at 25 GB) and then trying to find the leak, or whatever else is causing memory consumption to rise. From past experience, not an easy task...
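The "follow it over time, then dump before it hurts" approach can be sketched as two checks over periodic memory samples taken after a restart. Everything here is a hypothetical illustration (class `GrowthWatch`, the "share of increasing samples" heuristic, and the sample values); only the 25 GB threshold comes from the post above. `dumpHeap` uses the HotSpot-specific `HotSpotDiagnosticMXBean`, which expects a file name ending in `.hprof`. Keep in mind that a heap dump only captures the Java heap, so if the growth is outside the heap (as the 8 GB vs. 29 GB figures in this thread suggest), it will not show up there.

```java
import com.sun.management.HotSpotDiagnosticMXBean;
import java.io.IOException;
import java.lang.management.ManagementFactory;

class GrowthWatch {
    // Hypothetical heuristic: true if at least `minRatio` of consecutive
    // samples are larger than the previous one (memory never settles).
    static boolean isSteadilyGrowing(long[] samples, double minRatio) {
        if (samples.length < 2) return false;
        int increases = 0;
        for (int i = 1; i < samples.length; i++) {
            if (samples[i] > samples[i - 1]) increases++;
        }
        return (double) increases / (samples.length - 1) >= minRatio;
    }

    // True once the latest sample reaches the chosen dump threshold.
    static boolean shouldDump(long[] samples, long thresholdBytes) {
        return samples.length > 0 && samples[samples.length - 1] >= thresholdBytes;
    }

    // Writes a heap dump of this JVM (live objects only). Java heap only,
    // native memory is not included. Fails if the file already exists.
    static void dumpHeap(String hprofPath) throws IOException {
        HotSpotDiagnosticMXBean bean =
                ManagementFactory.getPlatformMXBean(HotSpotDiagnosticMXBean.class);
        bean.dumpHeap(hprofPath, true);
    }

    public static void main(String[] args) {
        long gb = 1L << 30;
        long[] samples = {10 * gb, 14 * gb, 17 * gb, 21 * gb, 26 * gb}; // illustrative only
        System.out.println("steadily growing: " + isSteadilyGrowing(samples, 0.8));
        System.out.println("dump now: " + shouldDump(samples, 25 * gb));
    }
}
```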

02 Sep 2022 04:33 PM

BTW, this happens quite frequently. It is one of the main reasons applications have to be restarted after some time...